Are these datasets the same?

Two-sample testing for data scientists

Danica J. Sutherland(she)

University of British Columbia (UBC) / Alberta Machine Intelligence Institute (Amii)

Pacific Conference on Artificial Intelligence – April 2, 2023

(Swipe or arrow keys to move through slides; for a menu to jump; to show more.)

Data drift

- Textbook machine learning:

- Train on i.i.d. samples from some distribution,

- If it works on , probably works on new samples from

- Really:

- Train on

- Pretend there's a that is an i.i.d. sample from

- If it works on , maybe it sorta works on

- Deploy on something that's maybe a distribution

- which might be sort of like

- but probably changes over time…

This talk

Based on samples and :

- How is different from ?

- Is close enough to for our model?

- Is ?

Two-sample testing

- Given samples from two unknown distributions

- Question: is ?

- Hypothesis testing approach:

- Reject null hypothesis if test statistic

- Do smokers/non-smokers get different cancers?

- Do Canadians have the same friend network types as Americans?

- When does my laser agree with the one on Mars?

- Are storms in the 2000s different from storms in the 1800s?

- Does presence of this protein affect DNA binding? [MMDiff2]

- Are these neurons' behavior affected by this odor?

- Do these dob and birthday columns mean the same thing?

- Does my generative model match ?

- Independence testing: is ?

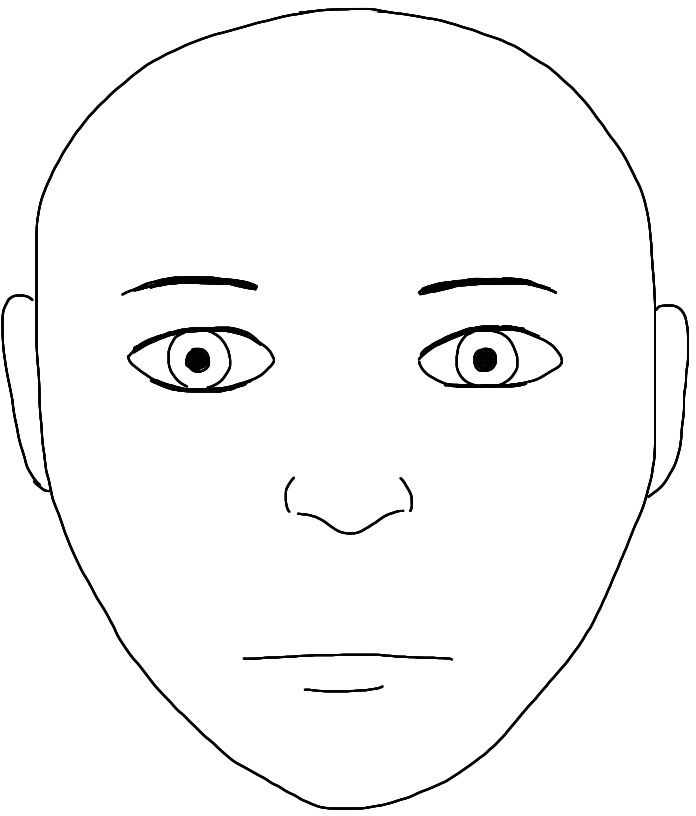

What's a hypothesis test again?

Permutation testing to find

Need

: th quantile of

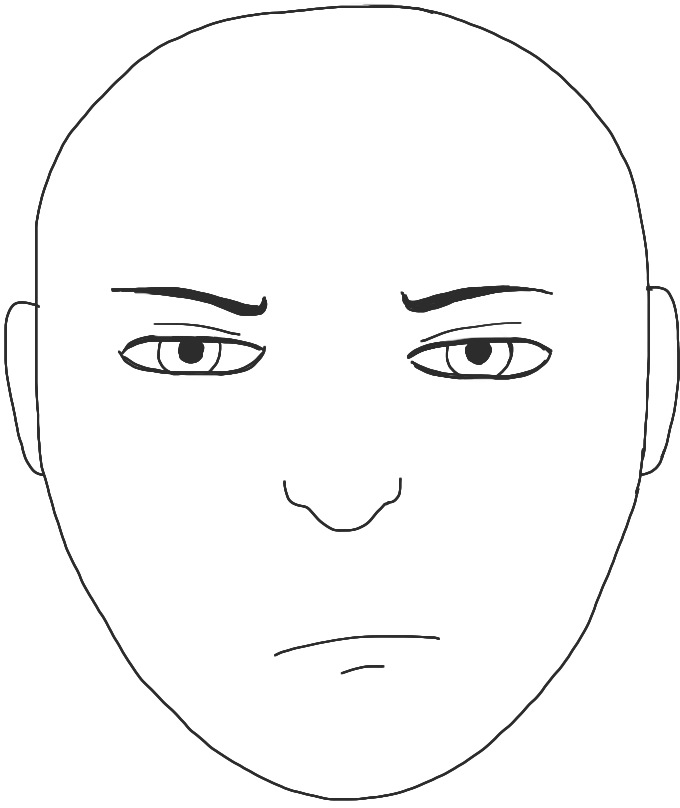

Classifier two-sample tests

- We need a that's large if , small if

- Can choose as the accuracy of on the test set

- If , classification is impossible, and so

- Usually performs better:

- If , normal distribution (but permuting on test set is better)

A more general framework

- C2ST-L:

- is a classifier's “logit”:

log probability is from rather than , plus const

- is a classifier's “logit”:

- Basically the same:

- What if we use more general features of the data?

Difference between mean embeddings

Only use data through : can kernelize!

|  |  | |

|---|---|---|---|

| 1.0 | 0.2 | 0.6 |

| 0.2 | 1.0 | 0.5 |

| 0.6 | 0.5 | 1.0 |

|  |  | |

|---|---|---|---|

| 1.0 | 0.8 | 0.7 |

| 0.8 | 1.0 | 0.6 |

| 0.7 | 0.6 | 1.0 |

|  |  | |

|---|---|---|---|

| 0.3 | 0.1 | 0.2 |

| 0.2 | 0.3 | 0.3 |

| 0.2 | 0.1 | 0.4 |

What's a kernel again?

- Linear classifiers: ,

- Use a “richer” :

- Can avoid explicit ; instead

- “Kernelized” algorithms access data only through

- Induces a notion of “smoothness” on functions,

Reproducing Kernel Hilbert Space (RKHS)

- Example: Gaussian RBF / exponentiated quadratic / squared exponential / …

- Some functions with small :

Maximum Mean Discrepancy (MMD)

The max is achieved by

MMD-based tests

- If is characteristic, iff

- Efficient permutation testing for

- : converges in distribution

- : asymptotically normal

- Any characteristic kernel gives consistent test…eventually

- Need enormous if the kernel is bad for this problem!

Deep learning and deep kernels

- C2ST-L is basically MMD with

- is a (learned) deep net – a learned kernel

- We can generalize some more to deep kernels:

- is a deep net, maps data points to

- is a simple kernel on

- gives MMD as

Optimizing power of MMD tests

- Asymptotics of give us immediately that , , are constants: first term usually dominates

- Pick to maximize an estimate of

- Use from before, get from U-statistic theory

- Can show uniform convergence of estimator

Blobs dataset

Blobs kernels

Blobs results

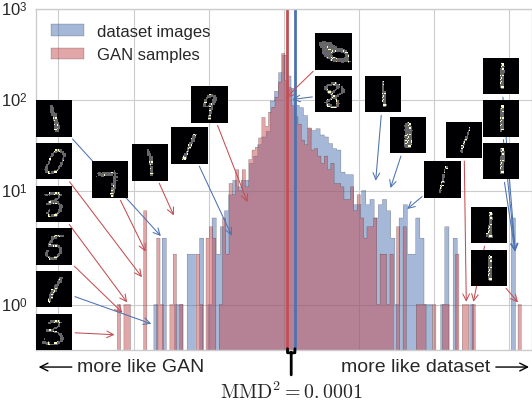

Investigating a GAN on MNIST

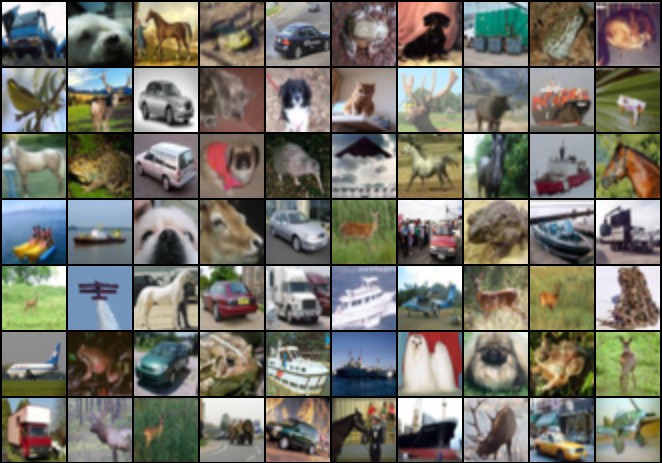

CIFAR-10 vs CIFAR-10.1

| ME | SCF | C2ST | MMD-O | MMD-D |

|---|---|---|---|---|

| 0.588 | 0.171 | 0.452 | 0.316 | 0.744 |

Ablation vs classifier-based tests

| Cross-entropy | Max power | |||||

|---|---|---|---|---|---|---|

| Dataset | Sign | Lin | Ours | Sign | Lin | Ours |

| Blobs | 0.84 | 0.94 | 0.90 | – | 0.95 | 0.99 |

| High- Gauss. mix. | 0.47 | 0.59 | 0.29 | – | 0.64 | 0.66 |

| Higgs | 0.26 | 0.40 | 0.35 | – | 0.30 | 0.40 |

| MNIST vs GAN | 0.65 | 0.71 | 0.80 | – | 0.94 | 1.00 |

But…

- What if you don't have much data for your testing problem?

- Need enough data to pick a good kernel

- Also need enough test data to actually detect the difference

- Best split depends on best kernel's quality / how hard to find

- Don't know that ahead of time; can't try more than one

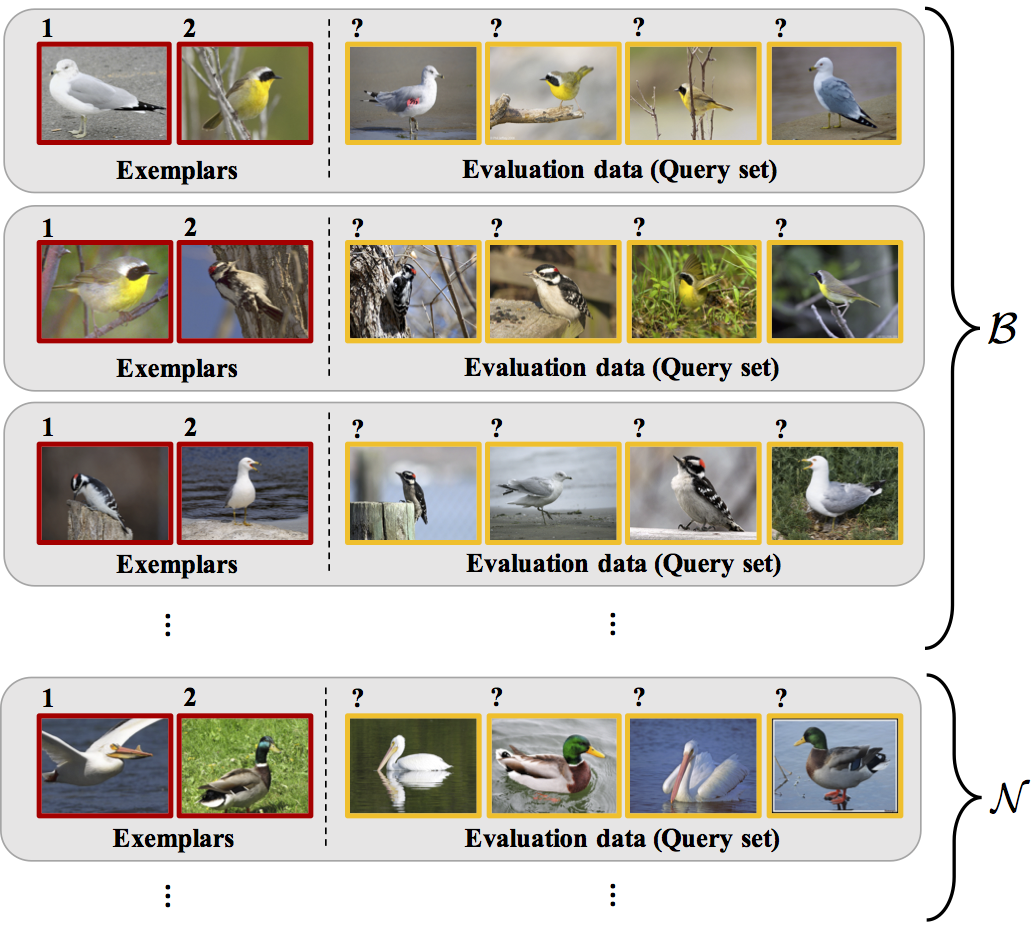

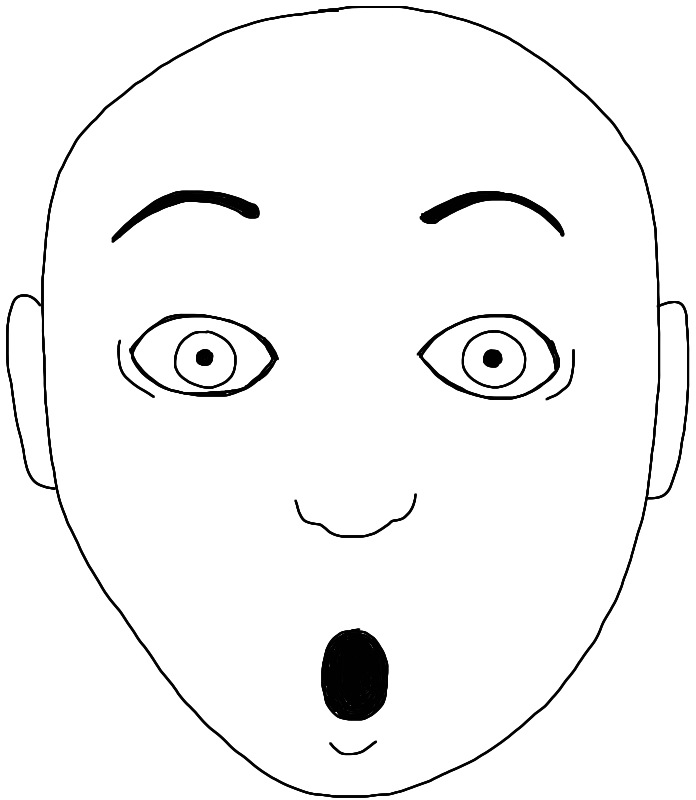

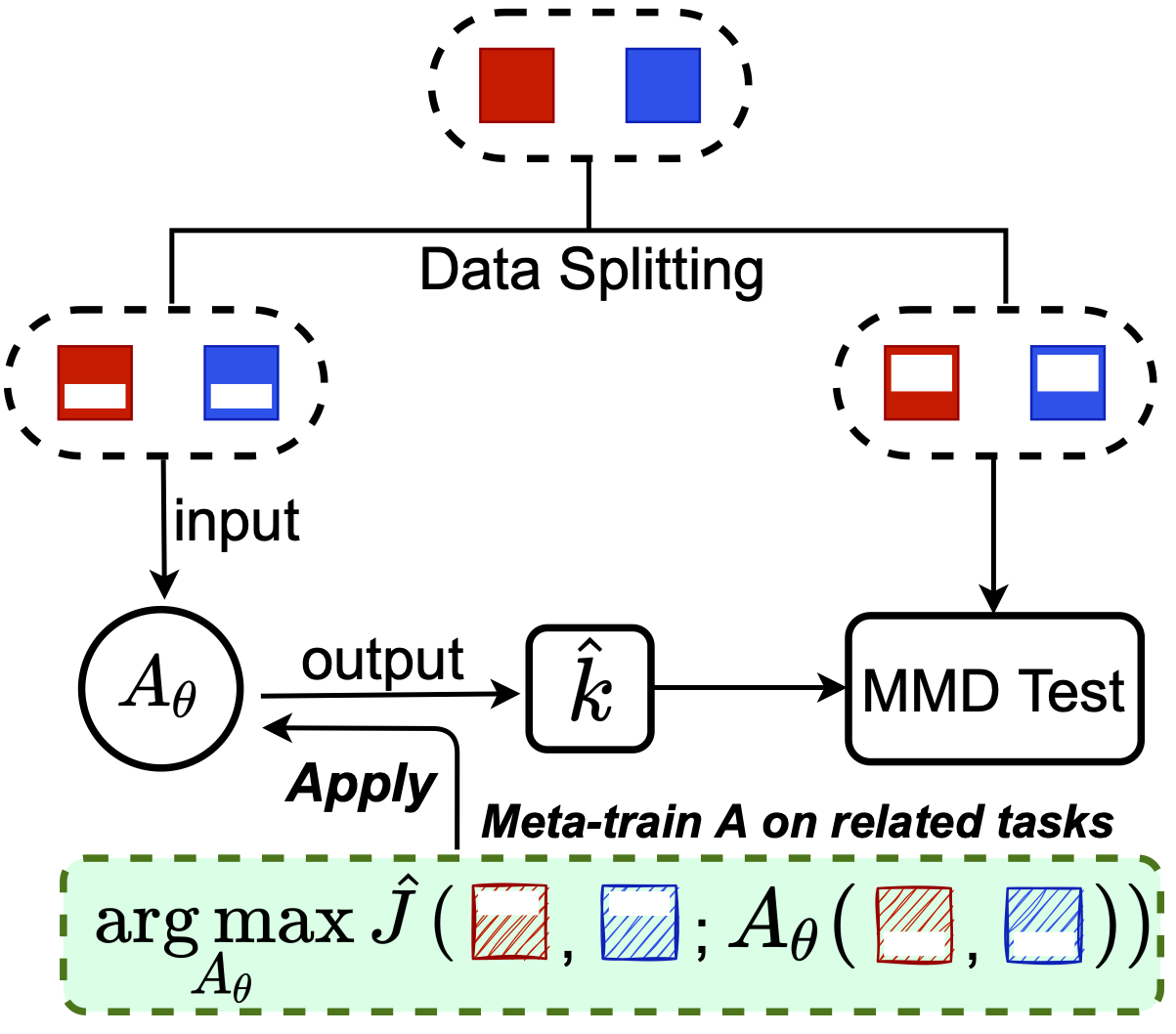

Meta-testing

- One idea: what if we have related problems?

- Similar setup to meta-learning:

![]() (from Wei+ 2018)

(from Wei+ 2018)

Meta-testing for CIFAR-10 vs CIFAR-10.1

- CIFAR-10 has 60,000 images, but CIFAR-10.1 only has 2,031

- Where do we get related data from?

- One option: set up tasks to distinguish classes of CIFAR-10

- airplane vs automobile, airplane vs bird, ...

One approach (MAML-like)

is, e.g., 5 steps of gradient descent

we learn the initialization, maybe step size, etc

we learn the initialization, maybe step size, etc

This works, but not as well as we'd hoped…

Initialization might work okay on everything, not really adapt

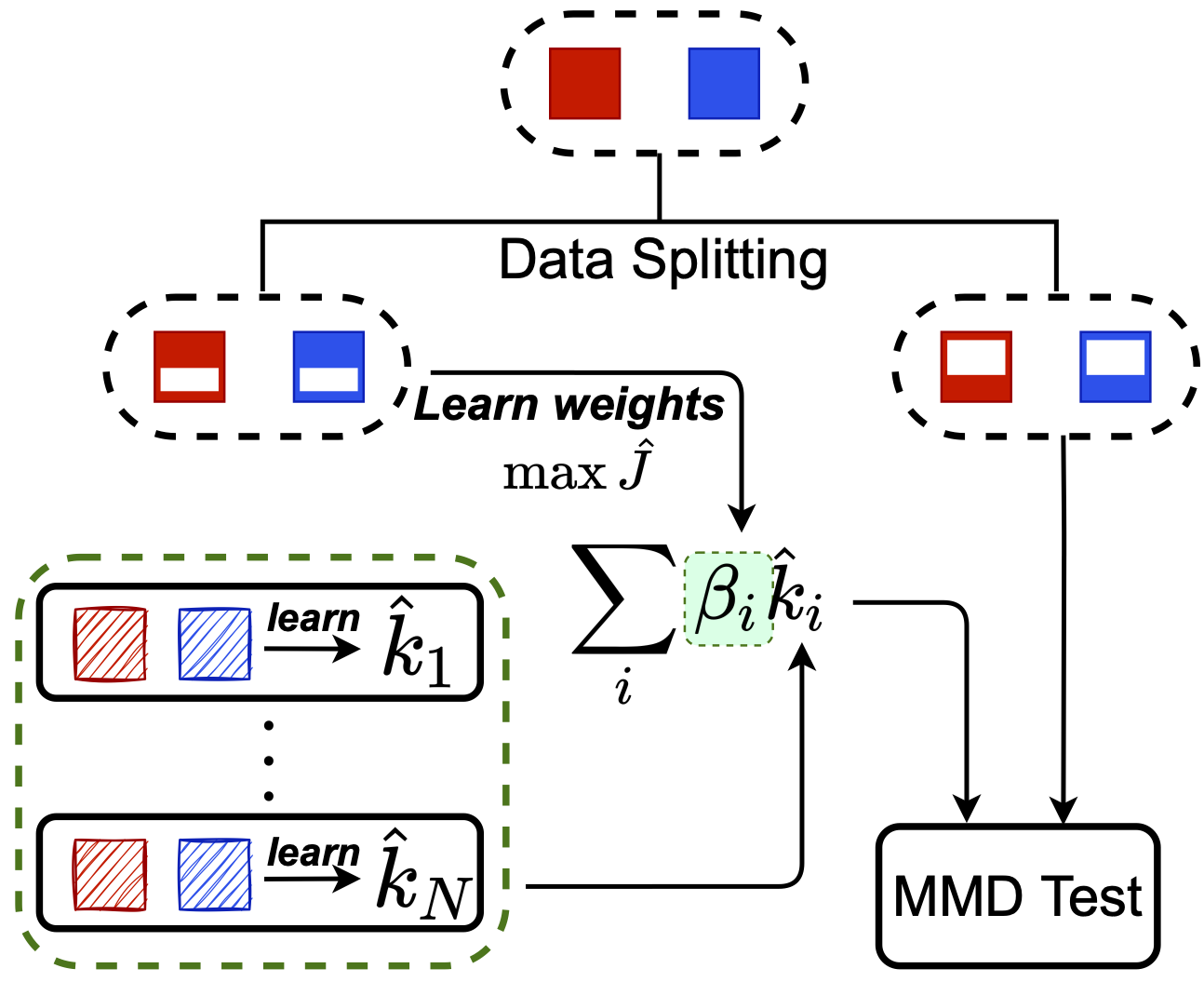

Another approach: Meta-MKL

Inspired by classic multiple kernel learning

Only need to learn linear combination on test task:

much easier

Only need to learn linear combination on test task:

much easier

Theoretical analysis for Meta-MKL

- Same big-O dependence on test task size 😐

- But multiplier is much better:

based on number of meta-training tasks, not on network size - Coarse analysis: assumes one meta-tasks is “related” enough

- We compete with picking the single best related kernel

- Haven't analyzed meaningfully combining related kernels (yet!)

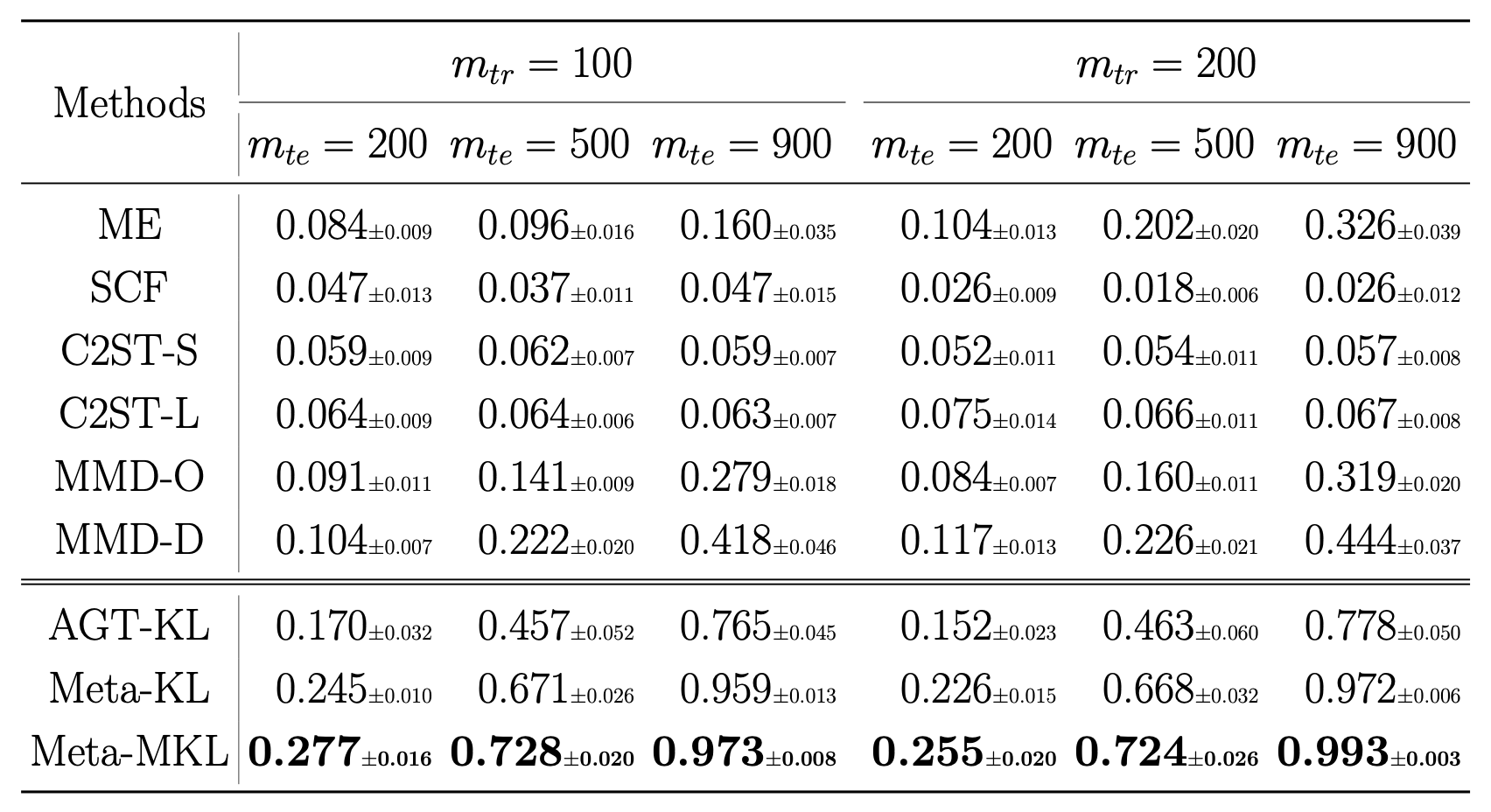

Results on CIFAR-10.1

But...

- Sometimes we already know there are differences we don't care about

- In the MNIST GAN criticism, first just picked out that the GAN outputs numbers that aren't one of the 256 values MNIST has

- Can we find a kernel that can distinguish from ,

but can't distinguish from ? - Also useful for fair representation learning

- e.g. can distinguish “creditworthy” vs not, but can't distinguish by race

High on one power, low on another

Choose with

- First idea:

- No good: doesn't balance power appropriately

- Second idea:

- Can estimate inside the optimization

- Better, but tends to “stall out” in minimizing

Block estimator [Zaremba+ NeurIPS-13]

- Use previous on blocks, each of size

- Final estimator: average of each block's estimate

- Each block has previous asymptotics

- Central limit theorem across blocks

- Power is

MMD-B-Fair

- Choose as

- is the power of a test with blocks of size

- We don't actually use a block estimator computationally

- , have nothing to do with minibatch size

- Representation learning:

- Deep kernel is

- could be deep itself, with adversarial optimization

- For now, just Gaussians with different lengthscales

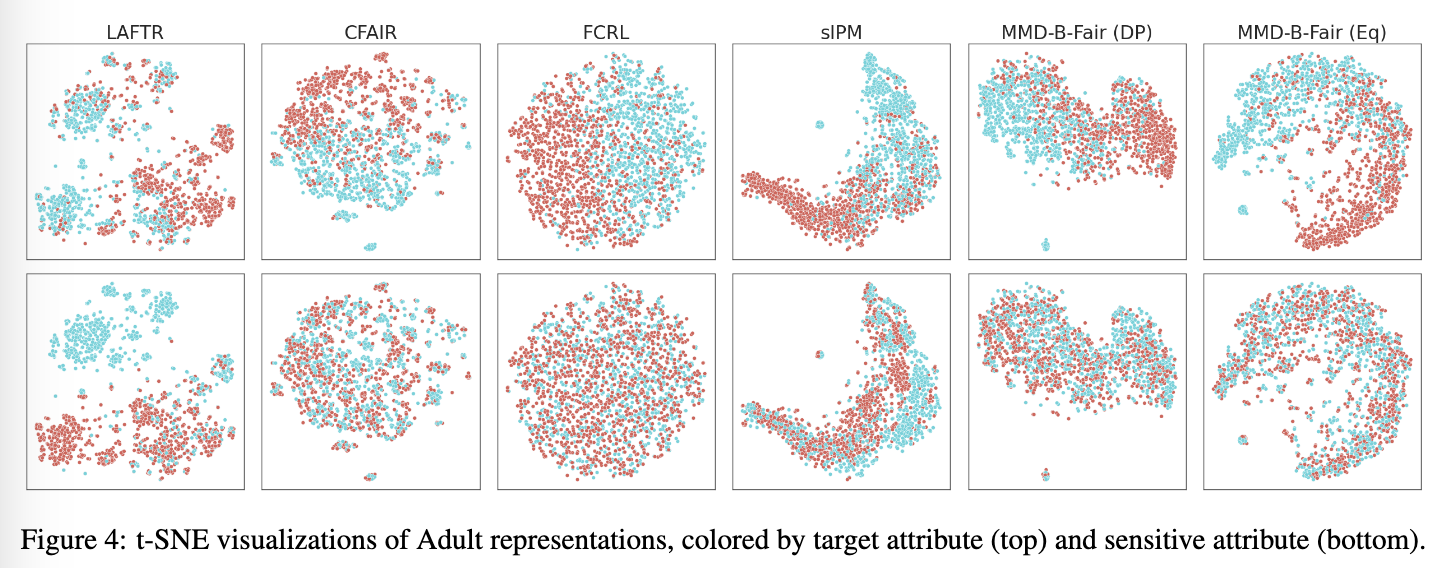

Learned representations

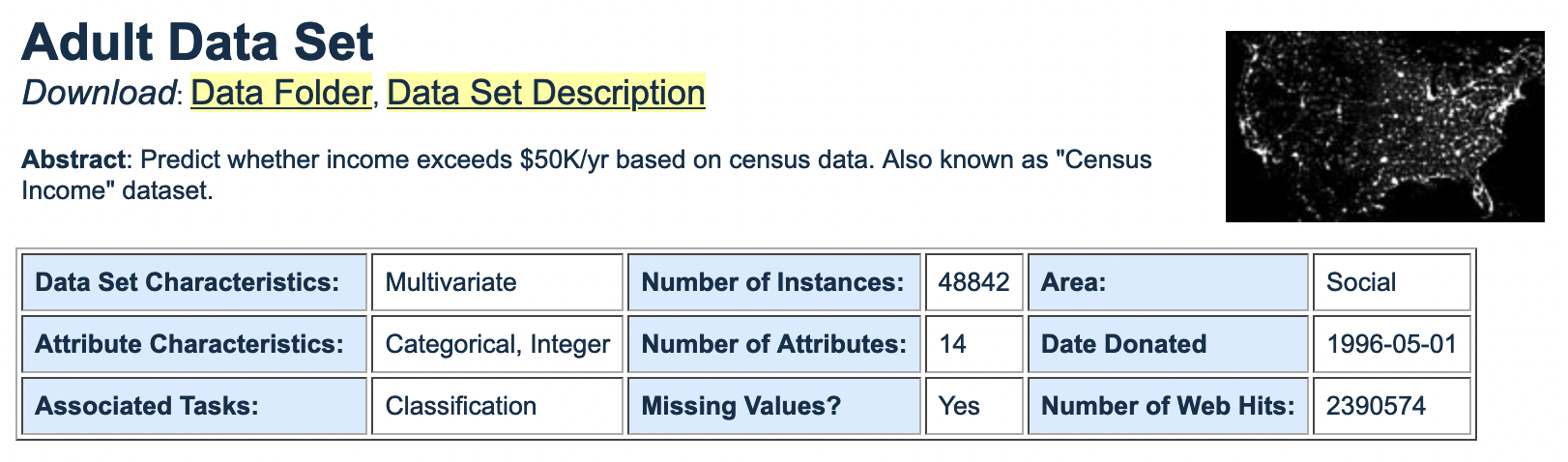

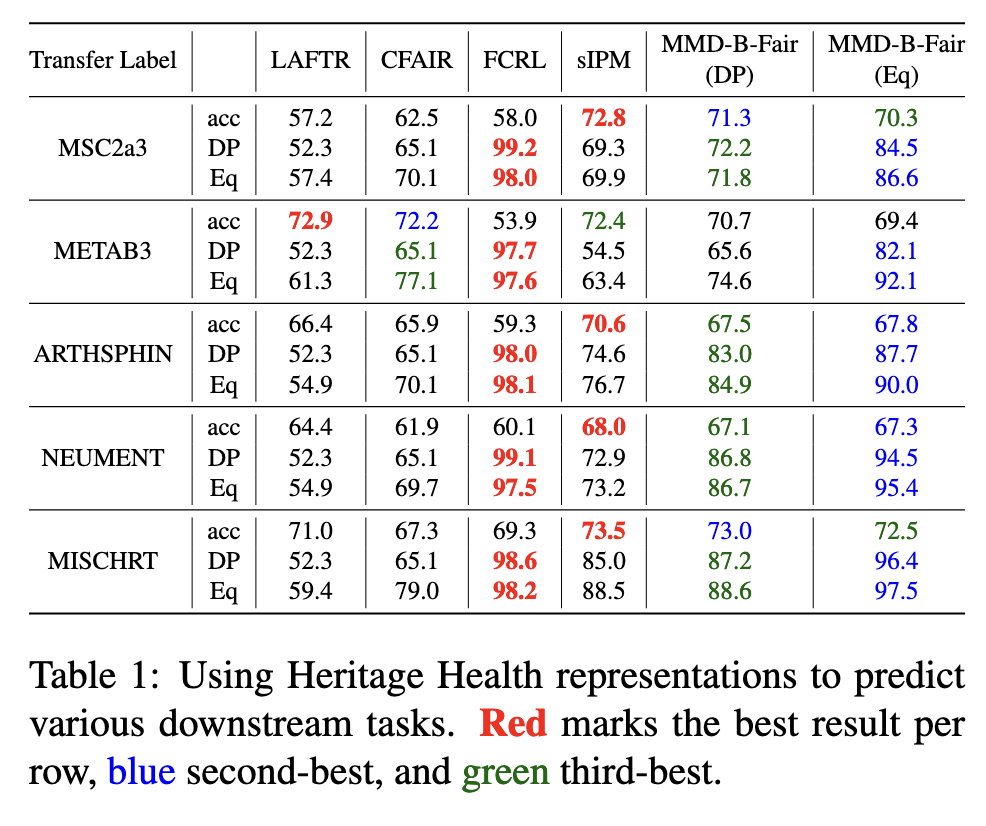

Quality of transfer learning

- Check if your data is different than it used to be!

- Pretty good method: train a classifier, check how accurate

- More powerful: use an optimized kernel method

A good takeaway

Combining a deep architecture with a kernel machine that takes the higher-level learned representation as input can be quite powerful.

— Y. Bengio & Y. LeCun (2007), “Scaling Learning Algorithms towards AI”